
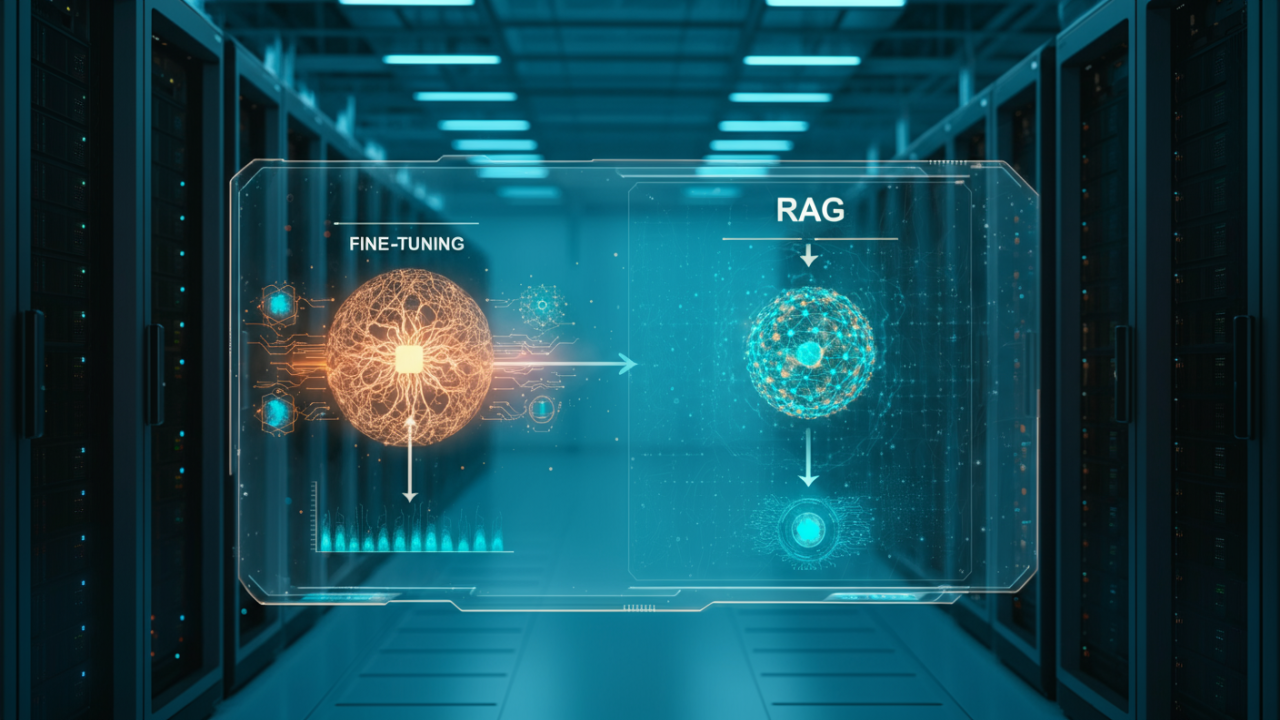

In the burgeoning landscape of Artificial Intelligence, organisations often need to tailor large language models (LLMs) to their specific requirements. Two prominent techniques for achieving this customisation are Fine-Tuning and Retrieval Augmented Generation (RAG). Understanding the nuances of each is crucial for making informed strategic decisions that align with your business objectives and available resources.

Fine-tuning involves taking a pre-trained foundational model and further training it on a task-specific dataset that is far smaller than the original pre-training corpus. This process adjusts the model's internal weights, allowing it to better understand and generate content aligned with a unique domain, brand voice, or specific task. The result is a model that has internalised new patterns and behaviours, moving beyond its initial generalist capabilities. This deeper integration can lead to highly nuanced and contextually aware outputs, but it demands significant computational resources and enough high-quality, labelled training data to cover the target task.
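
To make the idea of "adjusting the model's internal weights" concrete, here is a deliberately tiny sketch in Python. It is not how an LLM is fine-tuned in practice: the two-parameter linear model, the starting weights, and the dataset are all hypothetical stand-ins, chosen only to show existing weights being nudged further by gradient descent on task-specific examples.

```python
# Toy illustration only: a "pre-trained" linear model y = w*x + b whose
# existing weights are trained further on a small task-specific dataset.
# All names and numbers here are hypothetical.

def mse(w, b, data):
    """Mean squared error of the model y = w*x + b on (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, data, lr=0.05, epochs=500):
    """Continue gradient descent from the given weights rather than from scratch."""
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Assumed "general-purpose" starting weights.
pretrained_w, pretrained_b = 1.0, 0.0

# Assumed task-specific data, generated from y = 2x + 1.
task_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, task_data)

print("error before fine-tuning:", mse(pretrained_w, pretrained_b, task_data))
print("error after fine-tuning: ", mse(tuned_w, tuned_b, task_data))
```

The point to take away is that the new behaviour ends up stored in the weights themselves, so nothing extra has to be supplied at inference time. At LLM scale the same loop runs over billions of parameters, which is where the compute cost comes from.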

Retrieval Augmented Generation, conversely, leaves the foundational model's weights unchanged and augments its capabilities by giving it access to an external knowledge base during inference. When a query is made, a retriever component fetches relevant information from this external data source (which could be documents, databases, or web content). The retrieved information is then passed to the LLM alongside the user's prompt, enabling it to generate responses grounded in current, specific, and often proprietary data. RAG is particularly effective for scenarios requiring up-to-date information and factual accuracy, and it tends to reduce hallucinations, because the model draws on data retrieved at query time rather than relying solely on its pre-trained knowledge.
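
The retrieve-then-prompt flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the knowledge base is an invented in-memory list, the retriever is a simple bag-of-words cosine similarity standing in for the embedding-based search a real system would more likely use, and the final call to an LLM is left out entirely. The function names (`retrieve`, `build_prompt`) are hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query, passages):
    """Place the retrieved passages alongside the user's question."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the context below and cite sources by number.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )

# Invented example knowledge base.
knowledge_base = [
    "The returns policy allows refunds within 30 days of purchase.",
    "Our head office is open Monday to Friday.",
    "Premium support is included with the enterprise plan.",
]

query = "What is the refunds policy?"
passages = retrieve(query, knowledge_base, k=1)
prompt = build_prompt(query, passages)
print(prompt)  # this string would be sent to the LLM
```

Updating what the model "knows" here means editing `knowledge_base`, not retraining anything, and because each passage is numbered in the prompt, the response can point back to its source.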

The primary distinction between these approaches lies in their data requirements and operational expenditure. Fine-tuning necessitates a large, meticulously curated, and often domain-specific dataset for retraining, which can be time-consuming and expensive to prepare. It also incurs considerable computational costs during the retraining phase, requiring powerful GPUs for extended periods. RAG, while still needing a well-structured and indexed knowledge base, typically does not require retraining the core LLM, thus avoiding those intensive computational cycles. Its costs are more associated with maintaining and updating the retrieval infrastructure and the external data source, alongside the ongoing inference costs of the LLM itself.

Fine-tuning is the optimal choice when your primary goal is to fundamentally alter the model's behaviour, style, or underlying understanding for a very specific, consistent task. If your organisation requires the LLM to adopt a unique brand voice, master highly specialised jargon, or perform tasks where existing models consistently fall short even with external context, fine-tuning is appropriate. Consider this path if you have access to a large, consistent dataset that accurately reflects the desired model output, and if the changes required are deeply embedded in the model's 'personality' rather than merely factual recall. This approach is about teaching the model new skills, not just providing it with new facts.

RAG shines in scenarios where the LLM needs to access dynamic, frequently updated, or proprietary information without undergoing extensive retraining. It's ideal for applications demanding high factual accuracy, explainability (as sources can often be cited), and fewer hallucinations. Use RAG when your data is constantly evolving, such as internal company documents, product catalogues, or real-time market data, and you need the LLM to retrieve and synthesise information from these sources. This method allows models to stay current and provides a robust solution for knowledge-intensive tasks where the source of truth resides outside the model's initial training data.
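
As a rough aid, the selection criteria from the last two paragraphs can be written down as a small checklist function. This is only a sketch: the `UseCase` fields and the rules in `suggest_approaches` are a hypothetical encoding of the guidance above, not a formal decision procedure, and a real assessment would weigh cost and data quality as well.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    """Hypothetical checklist of the criteria discussed above."""
    needs_new_style_or_skill: bool    # brand voice, specialised jargon, new behaviour
    has_large_labelled_dataset: bool  # consistent examples of the desired output
    data_changes_frequently: bool     # catalogues, internal documents, market data
    needs_source_citations: bool      # explainability, traceable answers

def suggest_approaches(use_case):
    """Return the approaches the checklist points towards (possibly none)."""
    suggestions = []
    if use_case.needs_new_style_or_skill and use_case.has_large_labelled_dataset:
        suggestions.append("fine-tuning")
    if use_case.data_changes_frequently or use_case.needs_source_citations:
        suggestions.append("rag")
    return suggestions

brand_voice_bot = UseCase(
    needs_new_style_or_skill=True,
    has_large_labelled_dataset=True,
    data_changes_frequently=False,
    needs_source_citations=False,
)
policy_assistant = UseCase(
    needs_new_style_or_skill=False,
    has_large_labelled_dataset=False,
    data_changes_frequently=True,
    needs_source_citations=True,
)

print(suggest_approaches(brand_voice_bot))
print(suggest_approaches(policy_assistant))
```

An empty result suggests that neither criterion is clearly met and the base model with careful prompting may be worth trying first.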

Discover how our bespoke AI solutions can integrate Fine-Tuning or RAG into your operations.
